In this blog, I will cover the WaveFront APM community and enterprise editions. WaveFront is a SaaS-based cloud service. I will explain in detail the security aspects of transmitting monitoring data from an organization’s on-prem, private and public clouds. WaveFront doesn’t send application logs or user data to the SaaS cloud. You can add a WaveFront proxy to mask and filter data based on your organization’s security policy.

To learn the fundamentals of WaveFront and its technical architecture, please read my other blog.

Security with WaveFront SaaS

Wavefront is secure by design. It uses only the following monitoring data from an organization’s on-prem data centers and cloud availability zones (AZs):

- Metrics

- Traces & Spans

- Histograms

There are multiple ways to protect the privacy of data while it is transmitted from applications and infrastructure servers to the SaaS cloud. It’s safe to use.

Secure your data with WaveFront Proxy

Wavefront provides the following features to secure your data when monitoring your apps and infrastructure:

Note: It also works in an air-gapped environment (offline, with no Internet connectivity). You need to set up a separate VM with a public Internet connection that runs a WaveFront (PO) proxy. WaveFront agents push all stats from the Kubernetes and VM clusters to this proxy, and the telemetry data is then transmitted from this VM or bare-metal (BM) machine to the WaveFront SaaS cloud.
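To make the agent-to-proxy hop concrete, here is a minimal sketch of how a single metric point could be formatted and pushed to a proxy over a plain TCP socket. It assumes the Wavefront data format `<metricName> <metricValue> [<timestamp>] source=<source> [pointTags]` and a proxy listening on port 2878. The host name `wavefront-proxy.internal` and the metric names are hypothetical, so adjust them to your own setup.

```python
import socket
import time


def format_point(name, value, source, tags=None, timestamp=None):
    """Build one line in the (assumed) Wavefront data format:
    <metricName> <metricValue> [<timestamp>] source=<source> [pointTags]
    """
    ts = int(time.time()) if timestamp is None else int(timestamp)
    parts = [name, str(value), str(ts), f'source="{source}"']
    # Point tags are optional key="value" pairs appended after the source.
    for key, val in sorted((tags or {}).items()):
        parts.append(f'{key}="{val}"')
    return " ".join(parts)


def send_to_proxy(line, host="wavefront-proxy.internal", port=2878):
    """Send one formatted line to the proxy.

    The host name is hypothetical and 2878 is an assumed listener port.
    """
    with socket.create_connection((host, port), timeout=5) as conn:
        conn.sendall((line + "\n").encode("utf-8"))
```

In an air-gapped setup, only the proxy VM would need outbound Internet access. The application hosts would call something like `send_to_proxy(format_point("app.requests.count", 42, "vm-01"))` against the internal proxy address.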

Secure By Design

- WaveFront doesn’t read or transmit application, user or database logs.

- All local metrics data is stored at the WaveFront proxy with local persistence/databases

- Intrusion detection & response

- Securely stores username/password information

- Does NOT collect information about individual users

- Does NOT install agents that collect user information. NONE of the built-in integrations collect user information.

- Currently uses AWS to run the Wavefront service and to store customer application data. The AWS data centres incorporate physical protection against environmental risks.

- The service is served from a single AWS region spread across multiple availability zones for failover

- All incoming and outgoing traffic is encrypted.

- Wavefront customer environments are isolated from each other.

- Data is stored on encrypted data volumes.

- Wavefront development, QA, and production use separate equipment and environments and are managed by separate teams.

- Customers retain control and ownership of their content. Wavefront doesn’t replicate customer content unless the customer explicitly asks for it.

User and role based Security – Authentication and Authorization

- User and service account authentication (SSO, LDAP, SAML, MFA). For SSO, it supports Okta, Google ID and Azure AD. Users must authenticate with their login credentials, and API calls are also authenticated through a secure, auto-expiring token.

- Authentication using secret token & authorization (RBAC, ACL)

- It also supports user roles and service accounts

- Roles & groups access management

- Users in different teams inside the company can authenticate to different tenants and cannot access the other tenant’s data.

- Wavefront supports multi-level authorization: roles and permissions, and access control.

- Wavefront supports a high security mode where only the object creator and Super Admin user can view and modify new dashboards.

- If you use the REST API, you must pass in an API token and must also have the necessary permissions to perform the task, for example, Dashboard permissions to modify dashboards.

- If you use direct ingestion, you must pass in an API token and must also have the Direct Data Ingestion permission.
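As a rough illustration of the API token requirement for REST calls, the sketch below builds (but does not send) an authenticated request for a dashboard. It assumes the token is passed as an `Authorization: Bearer <token>` header and that dashboards live under `/api/v2/dashboard/<id>`. Both are my assumptions about the REST API rather than something covered in this post, and the cluster URL, token and dashboard ID are hypothetical placeholders.

```python
import urllib.request


def build_dashboard_request(cluster_url, api_token, dashboard_id):
    """Build a GET request for one dashboard, authenticated with an API token.

    Assumptions: Bearer-token header and the /api/v2/dashboard/<id> path.
    """
    url = f"{cluster_url.rstrip('/')}/api/v2/dashboard/{dashboard_id}"
    return urllib.request.Request(
        url,
        headers={
            "Authorization": f"Bearer {api_token}",
            "Accept": "application/json",
        },
        method="GET",
    )


# Hypothetical values, for illustration only.
request = build_dashboard_request(
    "https://example.wavefront.com", "MY_API_TOKEN", "my-dashboard"
)
# urllib.request.urlopen(request) would actually send it.
```

The token only proves who the caller is. The account behind it still needs the matching permission (for example, Dashboard permissions) for the call to succeed.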

How it protects user data

- Mask the monitoring data with a different name to maintain privacy

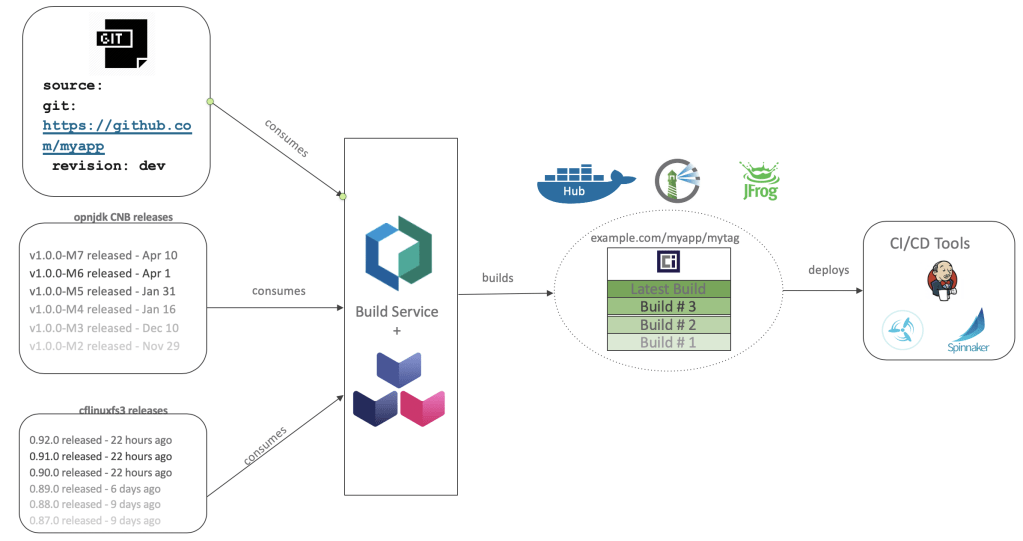

- The WaveFront agent runs on VMs, captures the data and sends it to the WaveFront proxy first, where filtering/masking logic can be applied. The filtered/masked data is then transmitted to the WaveFront SaaS cloud for analytics and dashboards.

- It also provides a separate private cloud or separate physical VM boxes to store customers’ data securely

- It isolates each customer’s data on the SaaS cloud and never exposes it to other customers

- Data can be filtered before it is sent to the WaveFront SaaS server

- Secure transit over Internet with HTTPS/SSL

- Data is stored on encrypted data volumes

- Protect all data traffic with TLS (Transport Layer Security) and HTTPS

- Perform a manual install and place the Wavefront proxy behind an HTTP proxy.

- Use proxy configuration properties to set ports, connect times, and more

- Use a whitelist regex or blacklist regex to control traffic to the Wavefront proxy

- Data mirroring: application data is duplicated across two availability zones (AZs) in a single AWS region
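The filtering and masking points in the list above can be pictured with a small sketch. This is plain Python that mimics the idea of whitelist/blacklist regexes and name masking at the proxy. It is not the proxy's actual rule syntax, and the patterns, metric names and host names are all hypothetical.

```python
import re

ALLOW = re.compile(r"^app\.")        # hypothetical whitelist: keep only app.* metrics
BLOCK = re.compile(r"\.debug\.")     # hypothetical blacklist: drop debug metrics
MASKS = {"payments-db-01": "db-a"}   # hypothetical mapping: real source -> masked name


def filter_and_mask(lines):
    """Drop lines that fail the whitelist or hit the blacklist, then mask sources."""
    kept = []
    for line in lines:
        metric_name = line.split(" ", 1)[0]
        if not ALLOW.search(metric_name) or BLOCK.search(metric_name):
            continue  # filtered out before anything leaves the network
        for real, alias in MASKS.items():
            # Replace the real source name only when it matches as a whole token.
            pattern = rf"source={re.escape(real)}(?=\s|$)"
            line = re.sub(pattern, f"source={alias}", line)
        kept.append(line)
    return kept
```

Only the lines returned by `filter_and_mask` would be forwarded to the SaaS cloud, so sensitive host names and unwanted metrics never leave the organization’s network.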

References

- Doc: https://docs.wavefront.com/

- Integrations: https://www.wavefront.com/integrations/

- Doc, videos and integrations: https://docs.wavefront.com/?utm_source=home-page-menu&utm_medium=referral&utm_campaign=home-page-menu

- Demo1 – https://www.youtube.com/watch?v=pgIXAId1Mag

- Demo2 – Microservices Observability with WaveFront – https://www.youtube.com/watch?v=CKP5rQoP8Sk

- SpringBoot Integration – https://docs.wavefront.com/wavefront_springboot.html

Courtesy:

- Rishi Sharda – https://www.linkedin.com/in/rsharda/

- Anil Gupta – https://www.linkedin.com/in/legraswindow/